Understanding AI Agents vs LLMs: Key Differences Explained

Comparison | Jan 15, 2026 | Patricia Angeline

The artificial intelligence landscape has become crowded with terminology that often gets used interchangeably. AI agents and large language models represent fundamentally different technologies, yet the distinction between them remains unclear to many. Understanding the difference between an AI agent and an LLM matters because the choice determines whether a system can only generate text or can also automate the operations a business depends on.

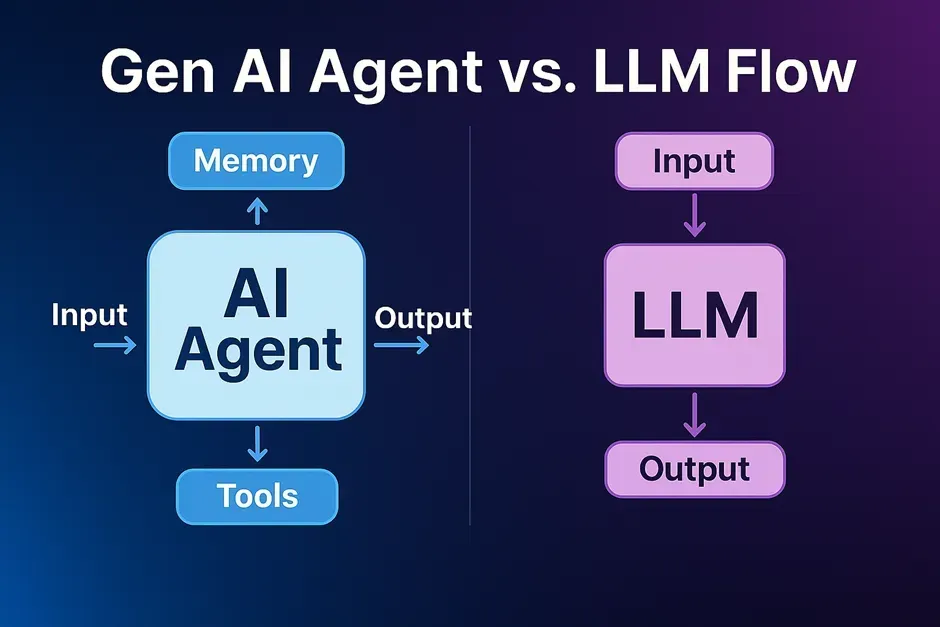

An LLM generates text based on patterns learned from training data. An AI agent takes autonomous actions to achieve specific goals. While modern AI systems increasingly combine both capabilities, recognizing their core differences helps businesses deploy the right solution for their needs. Agentic AI builds upon large language model foundations by adding the ability to perceive environments, make decisions, and execute tasks without constant human intervention.

What Are AI Agents and LLMs in Agentic AI?

What is a Large Language Model (LLM)?

A large language model processes and generates text by predicting what comes next based on vast amounts of text data it has seen during training. These systems excel at understanding context within conversations, producing human-like responses, and handling various language tasks from translation to summarization.

LLMs operate reactively. They wait for prompts, process the input, and generate responses. Each interaction starts fresh unless explicitly provided with previous context. The model doesn't remember past conversations or learn from new interactions during deployment.
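A minimal sketch of what stateless operation means in practice. The `fake_llm` function below is a hypothetical stand-in for a real model call, not any vendor's API; the point is only that the function can answer from nothing except the messages passed in on that call.

```python
from typing import Dict, List

Message = Dict[str, str]


def fake_llm(messages: List[Message]) -> str:
    """Hypothetical stand-in for a model call: it sees only `messages`."""
    names = [
        m["content"].split("My name is ", 1)[1].rstrip(".")
        for m in messages
        if m["role"] == "user" and "My name is " in m["content"]
    ]
    return f"Your name is {names[-1]}." if names else "I don't know your name."


# Call 1: the user states a name.
first = [{"role": "user", "content": "My name is Ada."}]
reply_1 = fake_llm(first)

# Call 2 without history: the model has nothing to recall.
reply_without_history = fake_llm([{"role": "user", "content": "What is my name?"}])

# Call 2 with history: the caller resends the earlier turns.
history = first + [
    {"role": "assistant", "content": reply_1},
    {"role": "user", "content": "What is my name?"},
]
reply_with_history = fake_llm(history)

print(reply_without_history)
print(reply_with_history)
```

Any sense of memory in a chat product built this way comes from the application resending earlier turns, not from the model itself.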

Key characteristics of LLMs:

Trained on vast datasets containing billions of text examples

Generate coherent, contextually relevant responses

Require explicit prompting to produce output

Stateless operation without persistent memory

Excel at natural language understanding and generation

Examples include GPT from OpenAI, Claude from Anthropic, and other foundation models that power conversational AI applications.

What is an AI Agent and How Does Agentic AI Work?

An AI agent operates as an autonomous system designed to perceive its environment, reason about information, take actions, and learn from outcomes. Unlike passive text generators, agentic systems actively work toward predefined goals through independent decision-making.

The agentic AI workflow follows a continuous cycle. The agent observes its environment or receives input, analyzes the situation using its reasoning capabilities, decides on appropriate actions, executes those actions, and evaluates results. This process repeats autonomously until the agent achieves its objective or determines the task cannot be completed.
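The cycle described above can be sketched as a small loop. Everything here is illustrative: `Inbox`, `observe`, `decide`, `act`, and `run_agent` are hypothetical names, and the reasoning step is a trivial rule where a real agent might call an LLM.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Inbox:
    """Hypothetical environment: a queue of unresolved ticket IDs."""
    open_tickets: List[str]
    resolved: List[str] = field(default_factory=list)


def observe(env: Inbox) -> List[str]:
    # Perception step: take a snapshot of the current state.
    return list(env.open_tickets)


def decide(observation: List[str]) -> Optional[str]:
    # Reasoning step. A real agent might ask an LLM here; this sketch
    # simply picks the first open ticket.
    return observation[0] if observation else None


def act(env: Inbox, ticket_id: str) -> bool:
    # Action step: resolve the ticket. IDs starting with "hard" fail,
    # which simulates a task the agent cannot complete.
    if ticket_id.startswith("hard"):
        return False
    env.open_tickets.remove(ticket_id)
    env.resolved.append(ticket_id)
    return True


def run_agent(env: Inbox, max_steps: int = 10) -> str:
    for _ in range(max_steps):
        observation = observe(env)
        choice = decide(observation)
        if choice is None:
            return "done"         # objective achieved
        if not act(env, choice):  # evaluate the result of the action
            return "gave_up"      # task cannot be completed
    return "step_limit"
```

The `max_steps` cap is a design choice worth keeping in any real loop, since an agent that never reaches its goal would otherwise run indefinitely.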

Core components of AI agents:

Perception systems that monitor environments or data streams

Reasoning engines that often use LLMs for understanding and planning

Action mechanisms that interact with external systems

Memory structures that retain context across interactions

Learning capabilities that improve performance over time

Examples include autonomous customer support systems that handle inquiries from initial contact through resolution, and workflow automation platforms that orchestrate complex tasks across multiple business systems.

How AI Agents Integrate Large Language Models

Modern AI agents frequently use LLMs as their reasoning engine. The large language model provides natural language processing capabilities, understanding user intent and generating human-like communication. However, the agent wraps this LLM core with additional layers that enable autonomous operation.

This integration represents the evolution from passive to active artificial intelligence. A standalone LLM waits for instructions. An agentic system powered by an LLM can interpret goals, break down complex problems into steps, decide which actions to take, and execute those actions through function calling.

The synergy between language understanding and autonomy creates powerful AI solutions. The LLM handles communication and reasoning while the agentic architecture enables the system to interact with databases, trigger workflows, and integrate with business tools.
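To make function calling concrete, here is a rough sketch of the agent side of it. It assumes the model returns a JSON string naming a tool and its arguments; that JSON shape, the `ORDERS` data, and the `get_order_status` tool are all hypothetical, and real providers each define their own request format.

```python
import json
from typing import Any, Callable, Dict

ORDERS = {"A100": "shipped", "A101": "processing"}  # hypothetical data


def get_order_status(order_id: str) -> str:
    return ORDERS.get(order_id, "unknown")


# Registry mapping tool names the model may request to real functions.
TOOLS: Dict[str, Callable[..., Any]] = {"get_order_status": get_order_status}


def dispatch(model_output: str) -> Any:
    """Parse a JSON tool request and run the matching function."""
    request = json.loads(model_output)
    name = request["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**request.get("arguments", {}))


# Assumed model output; a real model would generate this string.
model_output = '{"name": "get_order_status", "arguments": {"order_id": "A100"}}'
print(dispatch(model_output))
```

The model only proposes the call as text. The surrounding code decides whether and how to run it, which is where the agentic layer adds control.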

Explore platforms that combine LLM capabilities with agentic features for comprehensive business automation at KingCaller.ai

Key Differences Between AI Agents and LLMs in Real-World Applications

Autonomous AI Agent Architecture vs LLM Structure

An LLM consists primarily of neural networks trained to predict and generate text. The architecture focuses on processing sequences of tokens and producing coherent outputs based on learned patterns from training data.

Agentic AI employs multi-component systems with distinct layers for planning, execution, and monitoring. These systems incorporate function calling mechanisms that allow the AI agent to use external tools, query databases, and trigger actions in connected platforms.

While an LLM processes information, an agentic system can retrieve information from an external knowledge base, update records, schedule tasks, and coordinate workflows.

Memory management differs significantly:

LLMs maintain context only within the current conversation window

AI agents often implement persistent memory that tracks information across sessions

Agentic systems can build and reference knowledge bases over time

Traditional LLMs require full context to be provided with each prompt
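One simple way to picture persistent memory is a store that outlives a single session. The sketch below uses a JSON file for that purpose; `FileMemory` and the `preferred_channel` key are hypothetical, and production agents would more likely use a database or vector store.

```python
import json
import tempfile
from pathlib import Path
from typing import Dict


class FileMemory:
    """Hypothetical key-value memory persisted as a JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load whatever an earlier session saved, if anything.
        self.data: Dict[str, str] = (
            json.loads(path.read_text()) if path.exists() else {}
        )

    def remember(self, key: str, value: str) -> None:
        self.data[key] = value
        self.path.write_text(json.dumps(self.data))

    def recall(self, key: str, default: str = "") -> str:
        return self.data.get(key, default)


memory_path = Path(tempfile.mkdtemp()) / "agent_memory.json"

session_1 = FileMemory(memory_path)
session_1.remember("preferred_channel", "email")

session_2 = FileMemory(memory_path)  # a new session backed by the same store
print(session_2.recall("preferred_channel"))
```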

LLM Capabilities vs Agentic AI Automation

LLMs excel at text-centric tasks. They generate content, translate languages, summarize documents, answer questions, and engage in conversations. Their strength lies in understanding and producing natural language with human-like quality.

AI agents automate end-to-end processes. They integrate systems, orchestrate complex tasks, make decisions based on real-world data, and execute multi-step workflows. An agentic system doesn't just respond to queries; it actively works to complete objectives.

Consider the difference between an LLM-powered chatbot and an agentic chatbot. A basic chatbot powered by an LLM converses naturally but never leaves the loop of question and answer. A chatbot with agentic capabilities can understand a customer support request, check order status in a database, process a return, update the CRM, and send confirmation, all autonomously.

Real-World Use Cases: Chatbots, Automation, and Function Calling

LLM applications focus on language tasks:

Content creation for marketing and communications

Document analysis and summarization

Language translation across multiple languages

Question answering within conversational interfaces

Agentic AI applications extend into operational automation:

Customer service systems that resolve issues by accessing and updating multiple systems

Appointment scheduling assistants that check availability, book slots, and send confirmations

Procurement agents that monitor inventory, generate purchase orders, and track deliveries

Workflow assistants that coordinate tasks across teams and platforms

Proactive monitoring systems that detect issues and trigger responses

Deployment approaches differ substantially. Integrating an LLM typically involves API calls that send text and receive generated responses. Deploying AI agents requires connecting to business systems, defining function calling protocols, establishing security permissions, and configuring automation workflows.

Best Practices: When to Use LLMs vs Autonomous AI Agents

Best Practices for Large Language Model Implementation

Deploy LLMs when your primary need involves understanding or generating text without requiring actions in external systems. Content creation, document analysis, conversational interfaces, and language translation represent ideal use cases.

Cost considerations favor LLMs for text-only applications. Since they don't require complex integrations or persistent infrastructure, implementation remains straightforward. The AI system responds to prompts and generates text without needing access to databases or business platforms.

Effective LLM implementation requires:

Clear prompt engineering that provides necessary context

Appropriate context management within token limits

Understanding of model capabilities and limitations

Monitoring for output quality and relevance
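Context management within token limits often comes down to deciding which earlier messages to keep. The sketch below keeps the most recent messages that fit a budget. It assumes one whitespace-separated word is roughly one token, which is only an approximation; a real deployment would count with the model's own tokenizer. The names `estimate_tokens` and `trim_history` are hypothetical.

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    # Assumption: one whitespace-separated word is roughly one token.
    return len(text.split())


def trim_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep the most recent messages whose estimated size fits the budget."""
    kept: List[Message] = []
    used = 0
    # Walk backwards so the newest messages are considered first.
    for message in reversed(messages):
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order
```

Dropping the oldest turns first is the simplest policy. Summarizing older turns instead of discarding them is a common alternative when early context still matters.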

Best Practices for Deploying Agentic AI and Automation

Choose AI agents when tasks require autonomous operation, decision-making across multiple steps, or integration with business systems. Operations that benefit from proactive actions rather than reactive responses particularly suit agentic approaches.

Customer support that goes beyond conversation, appointment scheduling that actually books and manages calendars, and procurement processes that execute purchases all require agentic capabilities. The AI agent must perform actions, not just generate text about what actions could be taken.

Successful agentic AI deployment demands:

Clear goal definitions that specify desired outcomes

Robust integration with relevant systems and databases

Security frameworks that control what actions agents can take

Monitoring systems that track performance and catch errors

Fallback mechanisms when the agent encounters situations it cannot handle
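Two of the requirements above, controlling which actions an agent may take and falling back when it cannot proceed, can be sketched together. The action names, the `ALLOWED` set, and the escalation messages are hypothetical; this shows the shape of the check, not a complete security framework.

```python
from typing import Callable, Dict, Set


def refund_order(order_id: str) -> str:
    return f"refunded {order_id}"


def delete_account(user_id: str) -> str:
    return f"deleted {user_id}"


ACTIONS: Dict[str, Callable[[str], str]] = {
    "refund_order": refund_order,
    "delete_account": delete_account,
}

# Only actions listed here may run without a human in the loop.
ALLOWED: Set[str] = {"refund_order"}


def execute(action: str, target: str) -> str:
    if action not in ACTIONS:
        return f"escalated to human: unknown action {action}"
    if action not in ALLOWED:
        return f"escalated to human: {action} is not permitted"
    try:
        return ACTIONS[action](target)
    except Exception as error:  # fallback when the action itself fails
        return f"escalated to human: {error}"
```

For example, `execute("refund_order", "A100")` runs the refund, while `execute("delete_account", "u1")` is routed to a person because the action exists but is not on the allowlist.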

Integrating LLMs and AI Agents: Hybrid Automation Approaches

Modern AI solutions often combine both technologies. An AI assistant might use an LLM for natural language understanding while employing agentic architecture for task execution. This hybrid approach delivers conversational quality with operational capability.

Understanding the spectrum between basic chatbots and fully autonomous agents helps businesses make informed decisions. A customer service platform might start with LLM-powered conversations and gradually add agentic features like CRM integration, order management, and workflow automation.

Strategic considerations include:

Infrastructure requirements and technical complexity

Cost-benefit analysis comparing implementation effort to operational gains

Scalability needs as usage grows

Maintenance demands for each technology approach

The Future of Agentic AI in Autonomous Enterprise Operations

Future of AI Agents: Enhanced Function Calling and Autonomous Systems

Current developments show LLMs powering increasingly sophisticated agentic implementations. Enhanced function calling capabilities allow AI agents to use more tools with greater reliability. Multi-agent systems where several specialized agents collaborate on complex tasks are emerging across industries.

Improvements in memory and context retention will enable AI agents to maintain deeper understanding across extended interactions. Better integration frameworks will simplify connecting agentic systems with enterprise platforms, making advanced AI accessible to organizations of all sizes.

Real-World Impact: Differences Between AI Agents and Traditional Automation

The convergence of LLM intelligence with agentic capabilities creates systems that understand natural language while executing real-world tasks. Industry-specific agents with specialized knowledge bases will handle domain-specific operations autonomously.

Traditional automation required extensive programming for each scenario. Modern agentic AI can handle unexpected situations by reasoning through problems using its LLM capabilities. This flexibility expands what automation can cover.

Early adoption provides competitive advantages. Organizations that understand the difference between AI agents and LLMs can deploy appropriate solutions faster, automate more effectively, and adapt as the technology evolves. Building AI systems that combine both approaches positions businesses for the future of AI development.

Making the Right Choice

LLMs generate text based on learned patterns and prompts. AI agents take autonomous actions to achieve goals using LLMs as reasoning engines. Understanding AI agents means recognizing that they build upon large language model foundations while adding perception, planning, and execution capabilities.

Modern AI platforms increasingly integrate both technologies. An LLM provides the intelligence for understanding and communication. Agentic architecture adds the ability to perceive environments, make decisions, and automate workflows. Businesses benefit from deploying the right technology for each use case.

The distinctions matter because they determine what your AI system can accomplish. Text generation calls for an LLM. Process automation that spans multiple steps and systems calls for an AI agent. Complex applications such as chatbots that both converse and take action benefit from combining both approaches.

Discover how intelligent automation transforms business operations at KingCaller.ai

Common FAQs

What are the main differences between AI agents and LLMs?

LLMs generate text based on prompts and learned patterns. AI agents autonomously take actions toward goals, often using LLMs as their reasoning component while adding planning, decision-making, and execution capabilities.

Can an LLM function as an autonomous AI agent?

An LLM alone lacks agentic capabilities. It becomes part of an AI agent when integrated with systems that enable autonomous decision-making, function calling, and task execution beyond text generation.

What is agentic AI and how does it integrate automation?

Agentic AI refers to autonomous systems capable of perceiving environments, reasoning about information, and acting independently to achieve objectives through workflow orchestration and system integration.

Do AI chatbots use large language models?

Most modern chatbots use LLMs for conversational abilities. Truly agentic chatbots also integrate with business systems to execute tasks like updating records, processing transactions, and triggering workflows.
